When developing a web app, I like to refer to the twelve-factor app recommendations. Their approach to handling application logging is in the eleventh “commandment”:

It should not attempt to write to or manage logfiles. Instead, each running process writes its event stream, unbuffered, to stdout.

It seems that the folks at Docker also read this manifesto and thus provided us with great tools to manage logs produced by applications in containers. In this tutorial we are going to see how to use those tools to collect logs and manage them with Elasticsearch + Logstash + Kibana (aka ELK).

What you’ll learn

In this post I’ll cover:

- A quick description of ELK.

- How to create the machines you need in your Exoscale account: one to host the ELK stack and a second to host a demo web application.

- How to start ELK using Docker Compose.

- How to use the Docker logging driver to route the web application logs to ELK.

Prerequisites

Mac users: you’ll need the latest Docker Desktop.

Linux users: you’ll need Docker Engine, Docker Machine and Docker Compose.

On both systems you’ll need to install Exoscale’s cs tool, which you can grab with the Python ecosystem’s pip:

pip install cs

We’ll use docker-machine to create the instances we need on Exoscale and then cs to modify the security group to allow connections to our newly installed ELK stack.

cs and docker-machine both rely on the Exoscale API, so they need access to your API credentials.

Export the credentials in your shell with the following:

export EXOSCALE_ACCOUNT_EMAIL="<your exoscale account email>"

export CLOUDSTACK_ENDPOINT=https://api.exoscale.ch/compute

export CLOUDSTACK_KEY="<your exoscale API Key>"

export CLOUDSTACK_SECRET_KEY="<your exoscale Secret Key>"
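Before going further, it can help to confirm that all four variables are really set in the current shell. The following is only a minimal sketch: the function name check_exoscale_env is made up for this post and is not part of cs, docker-machine or the repository.

```shell
# Hypothetical helper (not part of cs or docker-machine): report which of the
# variables exported above are missing or empty in the current shell.
check_exoscale_env() {
  local name value missing
  missing=0
  for name in EXOSCALE_ACCOUNT_EMAIL CLOUDSTACK_ENDPOINT CLOUDSTACK_KEY CLOUDSTACK_SECRET_KEY; do
    # Look up the value of the variable whose name is stored in $name.
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "missing: $name" >&2
      missing=1
    fi
  done
  # 0 when every variable is set, 1 when at least one is missing.
  return "$missing"
}
```

Run it with check_exoscale_env && echo "credentials look complete". It only checks that the variables are non-empty, not that the key and secret are valid.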

Lastly, you’ll need a few files that I’ve provided in a git repo. Clone it locally:

git clone https://github.com/MBuffenoir/elk.git

cd elk

First up, what is ELK?

ELK is a collection of open source tools, maintained by Elastic.co, that combines:

- Elasticsearch: an indexer and search engine that we will use to store our logs.

- Logstash: a tool that collects and parses the logs, then stores them in Elasticsearch indexes.

- Kibana: a tool that queries the Elasticsearch REST API for our data and helps to create insightful visualizations.

Creating instances

Let’s use docker-machine to create an Ubuntu instance on Exoscale that will host ELK:

docker-machine create --driver exoscale \
  --exoscale-api-key $CLOUDSTACK_KEY \
  --exoscale-api-secret-key $CLOUDSTACK_SECRET_KEY \
  --exoscale-instance-profile small \
  --exoscale-disk-size 10 \
  --exoscale-security-group elk \
  elk

This will take a little time to run and it will give you a result similar to this:

Running pre-create checks...

Creating machine...

(elk) Querying exoscale for the requested parameters...

(elk) Security group elk does not exist, create it

[...]

Setting Docker configuration on the remote daemon...

Checking connection to Docker...

Docker is up and running!

For this demo I’ve mostly used the default parameters, but of course you can customize this to fit your needs. Take a look at the driver documentation to learn more. You can also scale up your instance later (either manually through the web interface or using cs and the Exoscale API).

At this point you should be able to connect to the remote Docker daemon with:

eval $(docker-machine env elk)

Let’s make sure that everything is okay:

docker version

docker info

Now let’s create a second machine on which we will run the demo application:

docker-machine create --driver exoscale \
  --exoscale-api-key $CLOUDSTACK_KEY \
  --exoscale-api-secret-key $CLOUDSTACK_SECRET_KEY \
  --exoscale-instance-profile tiny \
  --exoscale-disk-size 10 \
  --exoscale-security-group demo \
  demo

docker-machine has already opened all the necessary ports for you to connect to your new remote Docker daemons. You can review those ports in the newly created elk and demo security groups.

Now you need to open the ports necessary to access your running applications and have them communicate together.

Let’s use cs to do that. We’ll start by opening the port used to connect to Kibana:

cs authorizeSecurityGroupIngress protocol=TCP startPort=5600 endPort=5600 securityGroupName=elk cidrList=0.0.0.0/0

Now let’s enable our application to send its logs to Logstash:

cs authorizeSecurityGroupIngress protocol=UDP startPort=5000 endPort=5000 securityGroupName=elk 'usersecuritygrouplist[0].account'=$EXOSCALE_ACCOUNT_EMAIL 'usersecuritygrouplist[0].group'=demo

And finally, we’ll open port 80 to our demo web server:

cs authorizeSecurityGroupIngress protocol=TCP startPort=80 endPort=80 securityGroupName=demo cidrList=0.0.0.0/0
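Opening a port to 0.0.0.0/0 is convenient for a demo, but you may prefer to restrict Kibana to your own address. The two TCP rules above differ only in group, port and CIDR, so they can be wrapped in a small function. This is a sketch, not something shipped with cs: the name open_tcp_port and the CS override variable are assumptions made for illustration, and 203.0.113.7/32 below is a placeholder address.

```shell
# Hypothetical wrapper around the cs calls shown above.
# usage: open_tcp_port <security-group> <port> [cidr]
# Setting CS=echo prints the command instead of calling the Exoscale API.
open_tcp_port() {
  ${CS:-cs} authorizeSecurityGroupIngress protocol=TCP \
    startPort="$2" endPort="$2" \
    securityGroupName="$1" cidrList="${3:-0.0.0.0/0}"
}
```

For example, CS=echo open_tcp_port elk 5600 203.0.113.7/32 shows the call that would be made without changing anything; drop CS=echo to apply it.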

Launching ELK

Now that our instances and security group are ready to use, it is time to launch ELK.

First, you will need to upload the configuration files needed by ELK. We’ll do that with:

docker-machine scp -r conf-files/ elk:

Note that the certificate used here is self-signed. If you prefer you can replace or regenerate it with:

sudo openssl req -x509 -nodes -days 365 -newkey rsa:2048 -keyout ./kibana-cert.key -out ./kibana-cert.crt

Now you can connect to your remote Docker daemon and start ELK with:

eval $(docker-machine env elk)

docker-compose -f docker-compose-ubuntu.yml up -d

Enjoy the beauty of a job well done with:

docker-compose -f docker-compose-ubuntu.yml ps

Which should give you an output similar to this one:

Name            Command                          State    Ports
------------------------------------------------------------------------------------------
elasticdata     /docker-entrypoint.sh chow ...   Exit 0
elasticsearch   /docker-entrypoint.sh elas ...   Up       9200/tcp, 9300/tcp
kibana          /docker-entrypoint.sh kibana     Up       5601/tcp
logstash        /docker-entrypoint.sh -f / ...   Up       0.0.0.0:5000->5000/udp
proxyelk        nginx -g daemon off;             Up       443/tcp, 0.0.0.0:5600->5600/tcp, 80/tcp

You can see here the three applications that constitute the ELK stack and an nginx reverse proxy that secures them.
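A container in the Up state is not necessarily ready to serve requests yet, and Kibana in particular can take a little while to answer. A generic retry loop is one way to wait for it. This is a minimal sketch; the function name retry is made up for this post.

```shell
# Hypothetical helper: run a command until it succeeds, at most <attempts> times.
# usage: retry <attempts> <delay-seconds> <command> [args...]
retry() {
  local attempts delay i
  attempts=$1
  delay=$2
  shift 2
  i=1
  while ! "$@"; do
    if [ "$i" -ge "$attempts" ]; then
      echo "gave up after $attempts attempts: $*" >&2
      return 1
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  return 0
}
```

Assuming curl is installed locally and the proxy protects Kibana with HTTP basic auth (an assumption on my part, using the default credentials given further down), a wait could look like retry 30 2 curl -ksf -o /dev/null -u admin:Kibana05 "https://$(docker-machine ip elk):5600". The -k flag skips certificate verification, which is needed here because the certificate is self-signed.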

Running a demo web server and sending its logs to ELK

Let’s use Docker again to run a simple nginx web server that will simulate a typical web application:

eval $(docker-machine env demo)

docker run -d --name nginx-with-syslog --log-driver=syslog --log-opt syslog-address=udp://$(docker-machine ip elk):5000 -p 80:80 nginx:alpine

Ensure your web server is running properly with:

docker ps

Connect to it with your favorite web browser or by using curl:

curl http://$(docker-machine ip demo)

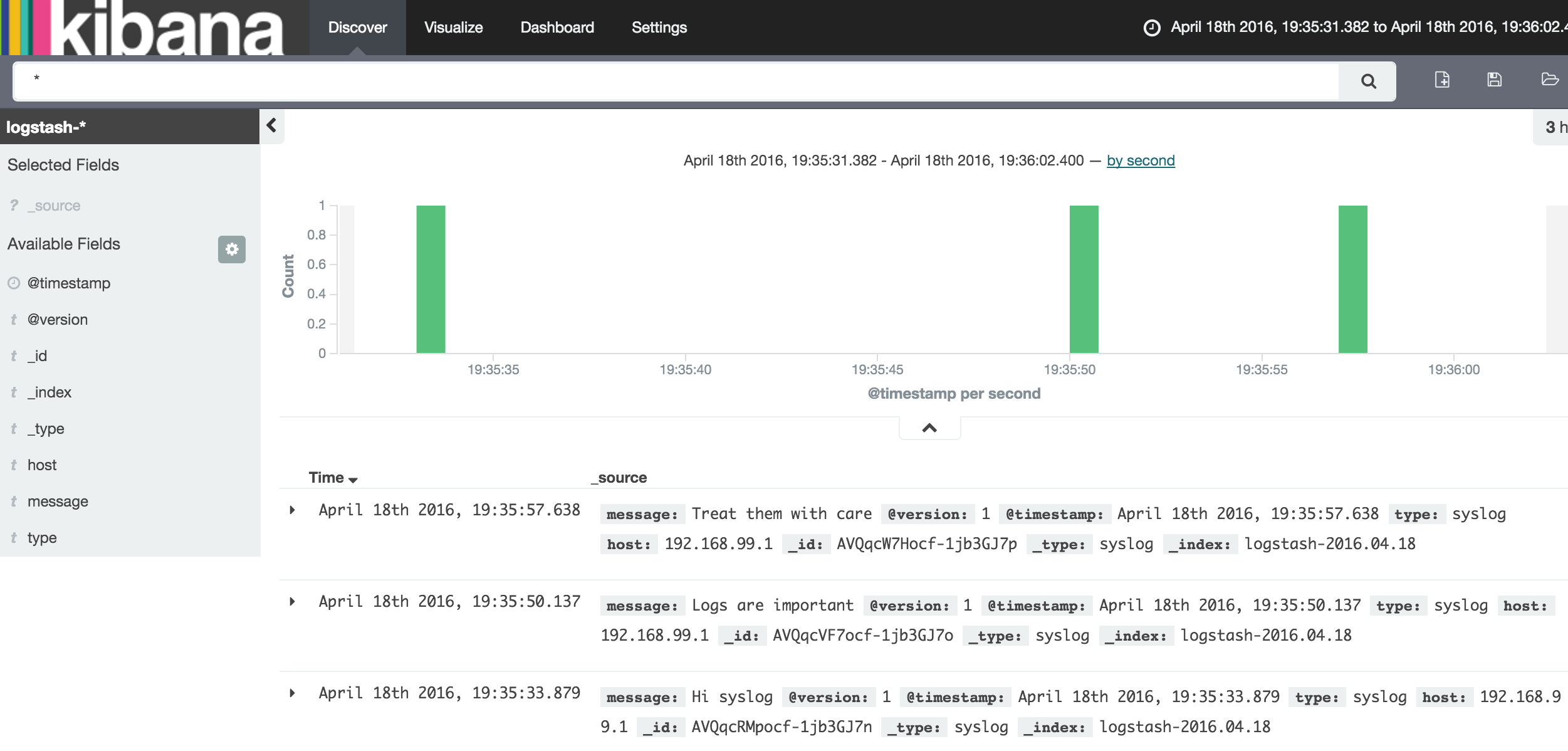
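With the syslog driver, each line the container writes to stdout or stderr is forwarded as a syslog message to Logstash on UDP port 5000. If logs fail to show up, it can be useful to send a hand-made message along the same path. The sketch below builds an RFC 3164-style line. The function names are made up, and facility 3 (daemon) with severity 6 (info) is my understanding of the driver's defaults for stdout, so treat those values as assumptions.

```shell
# Compute the syslog PRI value: facility * 8 + severity.
syslog_pri() {
  echo $(( $1 * 8 + $2 ))
}

# Build an RFC 3164-style line: <PRI>timestamp host tag: message
# Facility 3 (daemon) and severity 6 (info) are assumed driver defaults.
# usage: syslog_line <tag> <message>
syslog_line() {
  local tag msg
  tag=$1
  msg=$2
  printf '<%s>%s %s %s: %s\n' \
    "$(syslog_pri 3 6)" "$(date '+%b %e %H:%M:%S')" "$(uname -n)" "$tag" "$msg"
}
```

Because the security group rule only admits UDP traffic from the demo group, the message has to be sent from the demo instance. In a bash shell there (for example after docker-machine ssh demo), something like syslog_line test "hello from demo" > /dev/udp/<elk instance ip>/5000 should do it, provided that bash build supports /dev/udp redirections.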

Using Kibana to view the logs

Now is the moment you’ve been waiting for!

Browse to your ELK installation: https://<elk instance ip>:5600

The default username and password are admin / Kibana05.

As this is the first time you’re connecting to it, it will take a few seconds to get set up.

Once it’s ready, create your first index by hitting the green create button, then open the Discover tab. You should now be able to see the logs of your nginx demo web server.

Conclusion

Here we’ve seen the minimum you need to collect logs with ELK and Docker.

The next steps might be to enhance your Logstash configuration to detect special fields in your logs, maybe prune some sensitive information and, of course, create nice visualizations in Kibana. We can take a look at that another time.

Have fun collecting logs!